For the last several years we have been witnessing some major social media ugliness that has brought us to a breaking point over security, privacy, and—ultimately—the safeguarding of democracy itself.

Now we face major questions about how far we are willing to go, or should go, in moderating social media content and censoring posts, and about what exactly we are protecting ourselves from.

On the brighter side, there are groups pushing social media giants for greater transparency about how they deal with user content, whom they decide to censor, and why.

A coalition of nonprofit digital rights groups headed by the Electronic Frontier Foundation (EFF) has drawn up a list of basic moderation standards called the Santa Clara Principles on Transparency. The document sets minimum standards for how to treat user content online and calls on tech companies such as Facebook, Twitter and Google to disclose details concerning “content removals, account suspensions, appeals, and other practices that impact free expression”.

Basically, they want you to know why Facebook removed your post.

The purpose of this document is to bring more transparency to social media sites when it comes to takedowns. The idea is to have Facebook, Google and other platforms issue quarterly reports on how many user posts they remove, along with detailed explanations for users as to why their content was censored and which guideline they might have violated.

“What we’re talking about is basically the internal law of these platforms,” says Kevin Bankston, director of the Open Technology Institute, who worked on this document. “Our goal is to make sure that it’s as open a process as it can possibly be.”

It sounds simple enough, but this level of moderation could be a significant challenge for companies, even though Facebook has had its own community standards guidelines since last month.

These rules explain how content moderators deal with objectionable material, such as violence and nudity. Facebook has also created its first formal appeals process for users who believe they’ve been suspended in error.

Despite Facebook's efforts to change its moderation policy, the EFF thinks there is still more to be done, calling current practices "shoddy opaque private censorship."

"Our goal is to ensure that enforcement of content guidelines is fair, transparent, proportional, and respectful of users' rights," said EFF senior staff attorney Nate Cardozo.

Although still short on transparency, YouTube is closer to the Santa Clara rules than others. It’s had public guidelines from the beginning, as well as a notice and appeals process.

YouTube's first quarterly moderation report, released in April, showed that 8.2 million videos were removed during the last quarter of 2017, but it still lacks details on whether the content was flagged by the automated system. Consider, too, that almost 300 hours of video are uploaded to YouTube every single minute.

But it’s not just about violence and pornography. Social media moderation is about maintaining a democracy and stopping those who would undermine it. That doesn’t just mean free speech and no censorship, either, as we all learned from the Facebook scandal involving Cambridge Analytica.

The mass harvesting of user data for political or geopolitical ends is not free speech, and this is where things get tricky. Do we paradoxically need censorship to protect free speech?

It’s also about fake news.

Earlier this month, tech titans and experts sat down to discuss just how to moderate in a world rife with fake news.

In a panel discussion hosted by the National Constitution Center, law professor Nathaniel Persily challenged the audience to try to come up with a “fake news statute”.

“One thing you realize is that most of the stuff that you would end up regulating through a disinformation bill is not the problematic stuff that I think is going on in these platforms,” he said.

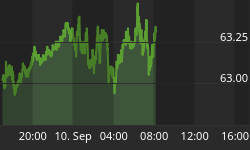


Or as Stanford University put it: “Indeed, the digital tools that can help democracy thrive are also the ones that can undermine it. Now the challenge lies with how to safeguard democratic values in a digital era.”

Some—especially after the Facebook scandal—aren’t so optimistic that the tech giants are willing to do what it takes to safeguard democracy.

Gizmodo suggests that Google, Facebook, Twitter and the like want to be the “gatekeepers” of democracy, but don’t want to take the responsibility that comes along with this.

The question is: Do we want tech titans to be responsible gatekeepers? As Twitter’s senior public policy manager Nick Pickles noted: “As technology platforms, it’s very dangerous for us to get into that space [of moderating views expressed by users], because either you’re asking us to change the information that we provide to people based on an ideological view, which I don’t think is what people want tech companies to be.”

By Michael Kern for Safehaven.com
