Something peculiar has been happening during the current economic recovery cycle. Nine years into the economic expansion, unemployment rates have fallen quite dramatically: The May 4 unemployment reading of 3.9 percent was so low that the country can be said to be technically in full employment. Yet real wage growth has remained elusive.

But what is full employment, or unemployment, for that matter?

Official Unemployment

To the average Main Street guy, the idea of full employment implies that everyone has a job and the jobless rate is zero.

The official definition of full employment, however, differs significantly from that common assumption.

The Bureau of Labor Statistics counts a person as unemployed if he or she is 16 or older, is actively searching for work and is unable to land a job. People not in the labor force, such as retirees and students, are excluded from the tally.

Unfortunately, full employment in that everyday sense has never been achieved in any country in the world, nor is it likely to ever be achieved. That's because in any economy there will always be a flux in the demand for and supply of different skills, meaning some are oversupplied while others are undersupplied at varying times.

The lowest unemployment rate ever achieved in the U.S. was 1.2 percent back in 1944 at the height of WWII, when millions of men were drafted to fight for the country, and women filled available jobs locally.

Still, the latest unemployment figure of 3.9 percent ranks as the second-lowest in nearly 50 years.

Source: Federal Reserve Bank of St. Louis

Meanwhile, classic economists define full employment as any time the jobless rate falls below the Non-Accelerating Inflation Rate of Unemployment (NAIRU).

The Congressional Budget Office currently puts NAIRU at 4.6 percent, meaning full employment has already been achieved by that yardstick.
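The arithmetic behind that comparison can be sketched in a few lines of Python. This is only an illustration, not the Bureau's or the CBO's actual methodology: the function names and the head counts (in millions) are hypothetical, chosen so that the rate rounds to the 3.9 percent reading cited above.

```python
def unemployment_rate(unemployed: float, labor_force: float) -> float:
    """Jobless people actively seeking work, as a percentage of the labor force."""
    if labor_force <= 0:
        raise ValueError("labor_force must be positive")
    return 100.0 * unemployed / labor_force


def at_full_employment(rate_pct: float, nairu_pct: float) -> bool:
    """True when the jobless rate has fallen below the NAIRU estimate."""
    return rate_pct < nairu_pct


# Hypothetical head counts, in millions (assumed for illustration only).
rate = unemployment_rate(unemployed=6.3, labor_force=161.5)
print(round(rate, 1))                    # 3.9
print(at_full_employment(rate, 4.6))     # True, using the 4.6 percent NAIRU cited above
```

Note that retirees, students and others outside the labor force appear in neither the numerator nor the denominator, which is why the rate can fall even when some people simply stop looking for work.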

Why Wages Remain Stagnant

Full employment is usually accompanied by rising wages for a simple reason: labor demand starts outstripping supply, so employers have to pay more both to keep their existing workers from leaving for competitors and to attract new ones.

Unfortunately, the mere hint of rising wages has been raising a red flag at the Federal Reserve, the country's central bank. Higher wages usually lead to higher prices and inflation. The Fed has a dual mandate to foster high employment and stable prices, and usually acts preemptively to prevent the economy from overheating by raising interest rates as soon as inflation starts to creep up. This slows down the economy, leading to workers losing jobs and rendering them unable to negotiate better wages.

The end result: higher wages become more of a dream than reality.

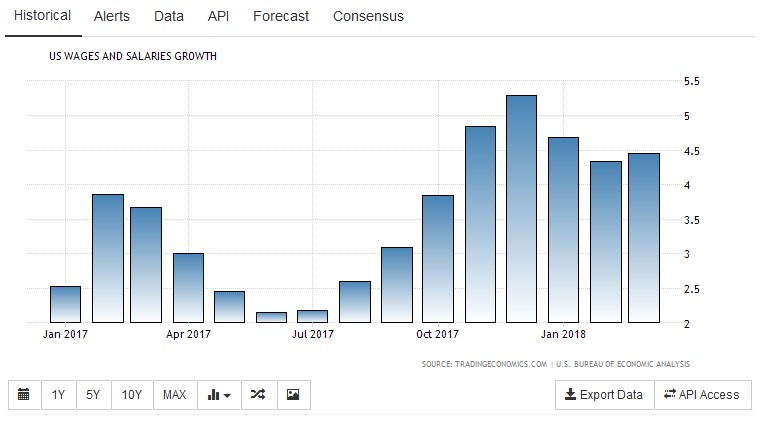

Real wage growth (after adjusting for inflation) clocked in at a near-invisible 0.6 percent in 2017, while unit labor costs were up an anemic 0.2 percent. That compares very poorly with the average 4-percent wage growth recorded during past expansion cycles.

We saw this preemptive reflex clearly in March, when the Fed rushed to hike interest rates shortly after the Bureau of Labor Statistics reported impressive wage growth.

Source: TradingEconomics

Meanwhile, weak unions have been weighing on wages because they are unable to negotiate better pay packages for their members. Compared to their peers a couple of decades ago, American workers are criminally underpaid. Indeed, there are estimates that workers have been collectively underpaid by about $10 trillion since 2000.

Wages and inflation have lately seen a gentle uptick. Let's just hope that the Fed does not get overzealous with interest rate hikes and end up tamping down wage gains.

By Alex Kimani for Oilprice.com
