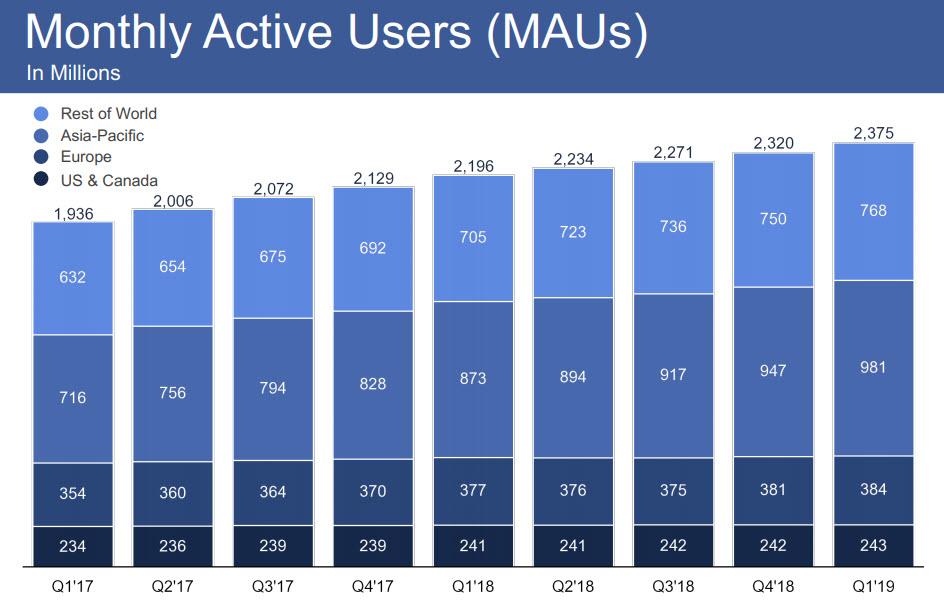

On one hand, Facebook - the world's biggest social media company - says it has just under 2.4 billion monthly active users across the globe.

On the other hand, Facebook said on Thursday that it removed a record 2.2 billion fake accounts in the first quarter. That figure, almost as large as Facebook's entire universe of "active" users, casts doubt on the former number, and it also demonstrates how the company is battling an avalanche of what Bloomberg called "bad actors" trying to undermine the authenticity of the world’s largest social network. Alternatively, one wonders just how credible the 2.4 billion MAU number is when there is such an onslaught of fake accounts. By comparison, Facebook disabled just over 1 billion fake accounts in the last quarter of 2018 and 583 million in the first quarter of last year. According to Facebook, the vast majority of such "fake" accounts are removed within minutes of being created, so they’re not counted in Facebook’s closely watched monthly and daily active user metrics, although there is of course no way to verify any of these claims.

Facebook also shared a new metric in Thursday’s report: the number of posts removed for promoting or engaging in drug and firearm sales. In the first quarter of 2019, Facebook pulled more than 1.5 million posts in these categories. According to Bloomberg, Thursday’s report "is a striking reminder of the scale at which Facebook operates - and the size of its problems."

The figures were released as part of the company's third-ever content transparency report, a biannual document that outlines Facebook’s efforts to remove posts and accounts that violate its policies. In the report, Facebook also said it was getting better at finding and removing other troubling content, such as hate speech.


The report also offered some insight into Facebook's AI algorithms, which apparently work well for some issues, like graphic and violent content: Facebook detects almost 97% of all graphic and violent posts it removes before a user reports them to the company. On the other hand, Facebook is still terrible at detecting the type of graphic or violent content that really matters, such as the "promotional" variety used in live videos, a "blind spot" that allowed a shooter to broadcast his killing spree live at a New Zealand mosque earlier this year.

The company said that its software also hasn’t worked as well for more nuanced categories, like hate speech, where context around user relationships and language can be a big factor. It is also why Facebook has cracked down on all aspects of speech and expression, having hired third-party scanners, although it has now been documented that a majority of Facebook's "banned" content tends to come from conservative sources.

Still, Facebook says it’s getting better. Over the past six months, 65% of the posts Facebook removed for pushing hate speech were automatically detected. A year ago, that number was just 38%.

Finally, in a blog post published earlier today discussing "How Facebook Measures Fake Accounts?", Alex Schultz, the company's VP of Analytics, made the following representations:

When it comes to abusive fake accounts, our intent is simple: find and remove as many as we can while removing as few authentic accounts as possible. We do this in three distinct ways and include data in the Community Standards Enforcement Report to provide as full a picture as possible of our efforts:

1. Blocking accounts from being created: The best way to fight fake accounts is to stop them from getting onto Facebook in the first place. That’s why we’ve built detection technology that can detect and block accounts even before they are created. Our systems look for a number of different signals that indicate if accounts are created in mass from one location. A simple example is blocking certain IP addresses altogether so that they can’t access our systems and thus can’t create accounts.

What we measure: The data we include in the report about fake accounts does not include unsuccessful attempts to create fake accounts that we blocked at this stage. This is because we literally can’t know the number of attempts to create an account we’ve blocked as, for example, we block whole IP ranges from even reaching our site. While these efforts aren’t included in the report, we can estimate that every day we prevent millions of fake accounts from ever being created using these detection systems.

2. Removing accounts when they sign-up: Our advanced detection systems also look for potential fake accounts as soon as they sign-up, by spotting signs of malicious behavior. These systems use a combination of signals such as patterns of using suspicious email addresses, suspicious actions, or other signals previously associated with other fake accounts we’ve removed. Most of the accounts we currently remove, are blocked within minutes of their creation before they can do any harm.

What we measure: We include the accounts we disable at this stage in our accounts actioned metric for fake accounts. Changes in our accounts actioned numbers are often the result of unsophisticated attacks like we saw in the last two quarters. These are really easy to spot and can totally dominate our numbers, even though they pose little risk to users. For example, a spammer may try to create 1,000,000 accounts quickly from the same IP address. Our systems will spot this and remove these fake accounts quickly. The number will be added to our reported number of accounts taken down, but the accounts were removed so soon that they were never considered active and thus could not contribute to our estimated prevalence of fake accounts amongst monthly active users or our publicly stated monthly active user number or even any ad impressions.

3. Removing accounts already on Facebook: Some accounts may get past the above two defenses and still make it onto the platform. Often, this is because they don’t readily show signals of being fake or malicious at first, so we give them the benefit of the doubt until they exhibit signs of malicious activity. We find these accounts when our detection systems identify such behavior or if people using Facebook report them to us. We use a number of signals about how the account was created and is being used to determine whether it has a high probability of being fake and disable those that are.

What we measure: The accounts we remove at this stage are also counted in our accounts actioned metric. If these accounts are active on the platform, we would also account for them in our prevalence metric. Prevalence of fake accounts measures how many active fake accounts exist amongst our monthly active users within a given time period. Of the accounts we remove, both at sign-up and those already on the platform, over 99% of these are proactively detected by us before people report them to us. We provide that data as our metric of proactive rate in the report.

We believe that of all the metrics, prevalence of fake accounts is the most important to focus on.
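
To make the distinction between these metrics concrete, here is a minimal Python sketch of the three stages as the blog post describes them. It is a toy model, not Facebook's actual system: the `Account` fields, the `BLOCKED_RANGES` list, the `metrics` function and all of the sample numbers are assumptions invented for illustration, and the IP addresses come from ranges reserved for documentation.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network

# Hypothetical blocklist (stage 1). The range is reserved for documentation.
BLOCKED_RANGES = [ip_network("203.0.113.0/24")]


@dataclass
class Account:
    ip: str
    fake: bool               # ground truth, knowable only in a toy example
    removed_at_signup: bool  # stage 2: disabled within minutes of creation
    removed_later: bool      # stage 3: disabled after becoming active
    user_reported: bool      # True if a user report, not the system, flagged it


def is_blocked(ip: str) -> bool:
    """Stage 1: requests from blocked ranges never reach sign-up."""
    return any(ip_address(ip) in net for net in BLOCKED_RANGES)


def metrics(attempts: list[Account]) -> dict[str, float]:
    # Blocked attempts are never created, so they appear in no metric.
    created = [a for a in attempts if not is_blocked(a.ip)]
    # "Accounts actioned" counts removals at sign-up and removals later.
    actioned = [a for a in created if a.removed_at_signup or a.removed_later]
    # Accounts removed at sign-up are assumed never to have become active.
    active = [a for a in created if not a.removed_at_signup]
    proactive = [a for a in actioned if not a.user_reported]
    return {
        "accounts_actioned": len(actioned),
        "monthly_active": len(active),
        "prevalence": sum(a.fake for a in active) / len(active) if active else 0.0,
        "proactive_rate": len(proactive) / len(actioned) if actioned else 0.0,
    }


# Invented sample data: one blocked range, one crude spam burst, a few late removals.
attempts = (
    [Account("203.0.113.5", True, False, False, False)] * 1000   # blocked outright
    + [Account("198.51.100.7", True, True, False, False)] * 900  # removed at sign-up
    + [Account("192.0.2.10", False, False, False, False)] * 95   # genuine users
    + [Account("192.0.2.20", True, False, True, False)] * 4      # caught later by system
    + [Account("192.0.2.21", True, False, True, True)] * 1       # caught via user report
)

result = metrics(attempts)
print(result)
```

In this made-up data set, 905 accounts are "actioned" against only 100 active accounts, yet prevalence is 5%, because the 900 accounts removed at sign-up never count as active. That is the mechanism Facebook says explains how removals can approach the size of its user base without inflating the MAU figure; the sketch only illustrates the arithmetic and does nothing to verify the claim.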

By Zerohedge.com